
12/14/2023 iOS text to speech API

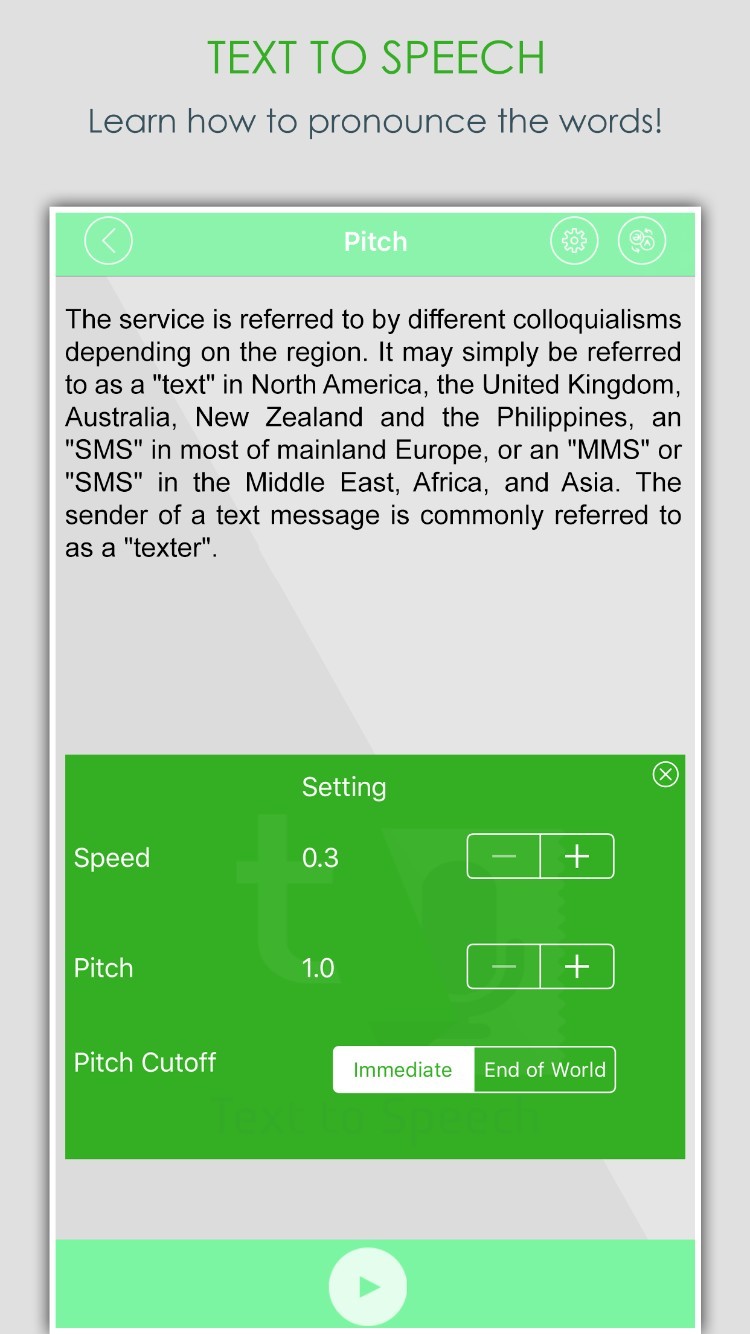

The Delphi text-to-speech library presented in this post works on Windows, macOS, iOS and Android. You can find the source code on GitHub as part of the JustAddCode repository. If you are only interested in the end result, you can stick to the first part of this post and bail when we get to the implementation details.

Choosing a feature set

A common issue with abstracting platform differences is that you must decide on a feature set. A specific feature may be supported on one platform but not on another. When it comes to text-to-speech, some platforms support choosing a voice, changing the pitch or speech rate, customizing pronunciation with markup in the text to speak, and so on. Other platforms may not support some of these features, or only in an incompatible way.
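One way to think about the feature-set question raised above is to let each platform backend declare which optional features it supports, and to have the shared layer drop anything a backend does not understand. The following is only a minimal sketch of that idea in JavaScript. It is not the API of the Delphi library, and the names createSpeaker, backend.features and backend.speak are hypothetical.

```javascript
// Illustrative only: not the API of the Delphi library discussed in this post.
// A hypothetical backend lists its optional features, e.g. ['rate', 'pitch'].
function createSpeaker(backend) {
  const supports = (feature) => backend.features.includes(feature);
  return {
    supports,
    speak(text, options = {}) {
      // Forward only the options this backend declares; silently drop the rest.
      const accepted = {};
      for (const [key, value] of Object.entries(options)) {
        if (supports(key)) accepted[key] = value;
      }
      return backend.speak(text, accepted);
    },
  };
}
```

Callers can ask supports('pitch') before showing a pitch slider, while speak stays safe to call with any option on any platform. The alternative design is to expose only the features every platform shares, which is simpler but gives up platform-specific extras.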

This post is a small exercise in designing a cross platform abstraction layer for platform-specific functionality. In particular, we present a small Delphi library to add cross platform text-to-speech to your app.

Tell the speech synthesis what (and how) to read

Once you have selected a voice, you can define a SpeechSynthesisUtterance and pass it to the speak method of the SpeechSynthesis API, for example inside a small speak(text) function.

Depending on your operating system and device, you should already be able to hear your computer talk. If not, there are a few pitfalls I have encountered myself and will describe in the next sections.

Use of speech synthesis in the ⛩ Japanese Phrasebook

If you want a live example of the Speech Synthesis API, you can open my Japanese Phrasebook app. Many travelers don’t know how to pronounce Japanese phrases correctly, so text-to-speech is a helpful addition to this web application. Kanji (東京) pose a problem, as they have different readings. I want to add furigana (とうきょう above 東京) soon and see whether text-to-speech implementations respect them when using annotations.

Using the speech synthesis half of the Web Speech API is easy, except for a few minor quirks. It is widely supported and can enhance the accessibility or functionality of modern web applications on countless devices.

If you have found this article useful, why not share it with your followers? And if you have any questions, I’ll be happy to answer them in the comments.
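The original listing for the speak function did not survive on this page, so here is a minimal sketch of the utterance step described under "Tell the speech synthesis what (and how) to read". The options parameter, including the injectable synth and Utterance entries, is an assumption added so the function can be exercised outside a browser. SpeechSynthesisUtterance, its voice, lang, rate and pitch properties, and speechSynthesis.speak are part of the Web Speech API.

```javascript
// Sketch of a speak helper. `options.synth` and `options.Utterance` are
// assumptions for testability; by default the browser globals are used.
function speak(text, voice, options = {}) {
  const synth = options.synth || globalThis.speechSynthesis;
  const Utterance = options.Utterance || globalThis.SpeechSynthesisUtterance;
  if (!synth || !Utterance) return false; // speech synthesis is not available

  const utterance = new Utterance(text);
  if (voice) {
    utterance.voice = voice;     // a voice object taken from getVoices()
    utterance.lang = voice.lang; // keep the language tag in sync with the voice
  }
  utterance.rate = options.rate ?? 1;   // 1 is the default speed
  utterance.pitch = options.pitch ?? 1; // 1 is the default pitch
  synth.speak(utterance);               // queues the utterance for speaking
  return true;
}
```

In a browser, calling speak('こんにちは', someJapaneseVoice) should be enough, where someJapaneseVoice stands for a voice object you selected earlier. The boolean return value is a design choice of this sketch so that callers can hide a "read aloud" button when synthesis is unavailable.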

“Isn’t it nice to have a computer that will talk to you?” – Agnes, voice in Apple’s text-to-speech since before Mac OS X

What is the Speech Synthesis API?

The Web Speech API enables web developers to incorporate speech recognition and synthesis into their web applications. These are available via the SpeechRecognition and SpeechSynthesis interfaces. While speech recognition has very limited browser support, speech synthesis is supported by all major desktop browsers, iOS Safari, Chrome Android and Samsung Internet.

Initialize speech synthesis and select one of the current device’s voices

The speechSynthesis interface is a property of the window object, if supported by the browser. The getVoices method returns a list of all voices that are available on the current device. A voice has a name, for example Kyoko, and a BCP 47 language tag, for example ja-JP, as well as a few other attributes. You can let the user choose a voice or iterate the list to find a voice for a specific language. Usually there aren’t many to choose from, except when looking for English voices.
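Iterating the voice list to find a voice for a specific language might look like the sketch below. The helper name pickVoice and its fallback to the primary language subtag are assumptions of this example, not part of the Web Speech API. Only window.speechSynthesis, getVoices, and the name and lang attributes of a voice come from the API itself.

```javascript
// Hypothetical helper: pick a voice for a BCP 47 language tag such as 'ja-JP'.
// `voices` is expected to be the array returned by speechSynthesis.getVoices().
function pickVoice(voices, lang) {
  // Defensive normalization, in case a platform reports 'ja_JP' or 'JA-jp'.
  const normalize = (tag) => String(tag).replace('_', '-').toLowerCase();
  const wanted = normalize(lang);
  const primary = wanted.split('-')[0];
  return (
    // Prefer an exact match on the full tag.
    voices.find((v) => normalize(v.lang) === wanted) ||
    // Otherwise accept any voice with the same primary language subtag.
    voices.find((v) => normalize(v.lang).split('-')[0] === primary) ||
    null
  );
}

// Browser-only usage; the guard keeps the snippet harmless elsewhere.
if (typeof window !== 'undefined' && 'speechSynthesis' in window) {
  const voice = pickVoice(window.speechSynthesis.getVoices(), 'ja-JP');
  console.log(voice ? voice.name : 'No Japanese voice found');
}
```

In some browsers getVoices can return an empty list until the voiceschanged event has fired, so a real page may need to run the lookup again after that event.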

Speech synthesis has come a long way since its first appearance in operating systems in the 1980s. In the 1990s Apple already offered system-wide text-to-speech support. Alexa, Cortana, Siri and other virtual assistants recently brought speech synthesis to the masses. In modern browsers the Web Speech API allows you to gain access to your device’s speech capabilities, so let’s start using it!
